AI has a problem now — or: Against Marshmallow-Man AIs

The case for local and open-source models

"Why do you test for humans?" he asked.

"To set you free."

"Free?"

"Once men turned their thinking over to machines in the hope that this would set them free. But that only permitted other men with machines to enslave them."

" 'Thou shalt not make a machine in the likeness of a man's mind,' " Paul quoted.

"Right out of the Butlerian Jihad and the Orange Catholic Bible," she said. "But what the O.C. Bible should've said is: 'Thou shalt not make a machine to counterfeit a human mind.' "

Dune (1965), by Frank Herbert. Chapter 1, dialogue between Paul Muad’Dib Atreides and the Bene Gesserit Reverend Mother.

I finally found it.

I’d been looking for merchandise like this for so long, and my stakeout had paid off. Someone was selling it in a city next to me.

An anonymous email later and I was in contact with the seller; there were many others interested in the goods, so I had to act fast. Something in the original post signaled to me that I met the dealer before — if I was right, he was the one that sold me my first sample, the one that got me hooked. Here I was once again, now going for the heavy stuff.

We came to an agreement after a quick exchange and my adrenaline spiked in response. The terms agreed upon: as-is, no guarantees, cash only. We would meet in a police station parking lot, 40 miles from me; I should park in an adequate location, get out of my car and wait for further instructions. It was a risky transaction and a lot could go wrong, but the opportunity was worth it.

The clandestine rendezvous happened last December on a sunny North Carolina afternoon. Only necessary words were exchanged. The merchandise was presented and inspected, and payment was made as soon as the goods were safely inside my vehicle. With the transaction concluded, we silently parted ways. The original Craigslist listing had been taken offline within the hour.

Once back home, I took stock of my newest acquisition: a defective HPE Proliant Gen9 server, a beast of a machine when it was originally sold in 2015; heavy and cumbersome, it was nothing like the previous piece of e-waste I bought from him, a 2012 PC that I still use for network attached storage. The server sported a single v3 Xeon CPU, 32gb of ECC DDR4 2133 RAM, two 500W PSUs, and a RAID controller card with 4 900gb SAS drives. It had a faulty 96 Wh battery (the Proliant has an internal battery for safety and redundancy purposes) which had to be replaced. Even so, for $75, it gave me lots of potential for what I had in mind.

I then bought on Ebay: 160 gb of additional RAM of the same specs to use all available slots, two Xeon E5-2690v4 (14 cores, 28 threads each), a new CPU cooler, and the internal battery I had to replace. On AliExpress, I bought the cheapest NVMe drive I could find (128gb) and a PCIe-to-M2 NVMe card. Ended up spending $150 more on these parts, for a grand total of $225 spent on the experiment.

With the new parts installed, I loaded the suite of open-source software I’d need: Proxmox as a hypervisor OS; Ubuntu Server 22.04 as a guest OS running in an LXC container; Oobabooga’s text-generation-webui as a webUI for running large language models; and a series of optimization libraries to try to give the old Xeon CPUs a fighting chance at running LLMs with reasonable speeds — something they were obviously not designed to do.

By the end of that day, I was getting over 3 tokens per second running the best available open source LLM, Mixtral 8x7b-Instruct, with 4-bit quantization. True, any data ingested by the model would take a long time to process (something like 5 minutes for 500 tokens or so), but small, simple questions would be answered in reasonable timeframes. With the amount of RAM available, I could even run a 70b Llama2 model if I wanted to (though I couldn’t expect more than half a token per second).

The speed didn’t matter though. My experiment was successful.

These AIs were created by ingesting a percentage of the cumulative knowledge of our species, instantiated by decades of data available on the internet, which were then compressed into tensors that represent the digital synapses of their artificial neural networks. They cost millions of dollars to train from scratch. And yet, with $225 of junk equipment and open-source software, I had them running locally, off the grid, at my sole disposal.

I made e-waste think.

*************

Why do that, you say?

For once, it’s fun. There is a level of poetry in rescuing old equipment and making it think, and I also appreciate the irony in getting worthless equipment to run software that costs a few hundreds of thousands of dollars to train. Storing LLMs and running them locally on e-waste is an expression of that urge for autonomy that every man seems to feel after fatherhood – a nerdier version of a woodworking hobby.

Secondly, the idea isn’t completely terrible. Running LLMs using CPUs instead of GPUs will always be very slow, but it’s way cheaper given the cost difference between CPUs and graphic cards — and performance-wise, it turns out that old Xeon servers could even be faster than the best enthusiast CPUs on the market today.

This is so because the inference speed in LLMs that are run on CPUs can be bottlenecked by RAM memory bandwidth, and servers are just better than consumer CPUs in that regard. PCs typically work with 2 memory channels (meaning it accesses its memory 2 times per clock cycle), while these server-grade Xeon chips offer 4 memory channels each, to a grand total of 8 memory channels for my rescued server. The net effect of this is that doing CPU inferencing on my old server could in theory be up to twice as fast as doing it on my expensive Intel i9 13900K, even factoring in the difference in RAM speeds between the old DDR4 and the new DDR5 memory. As small models get smarter and AI inference algorithms get better, there can be a point where the sheer power of these old machines makes them useful again (though probably at a higher energy consumption than more modern alternatives).

There’s also a deeper reason to do it, equal parts sobering and paranoid.

It’s been almost a year since OpenAI launched GPT4. Its launch changed the lives of some people, myself included, that found in it the perfect partner for continuous learning and office/software development work. What are the odds that GPT4, or something like it, won’t be around in a year?

Not your weights, not your model

Think about it. There are no guarantees that the powerful AI models out there will continue to be accessible and useful in the long run. This statement would sound crazy or conspiratorial if I were to publish it last week, when I first put it on paper; the happenings of this week, though, helps me avoid the tin foil hat.

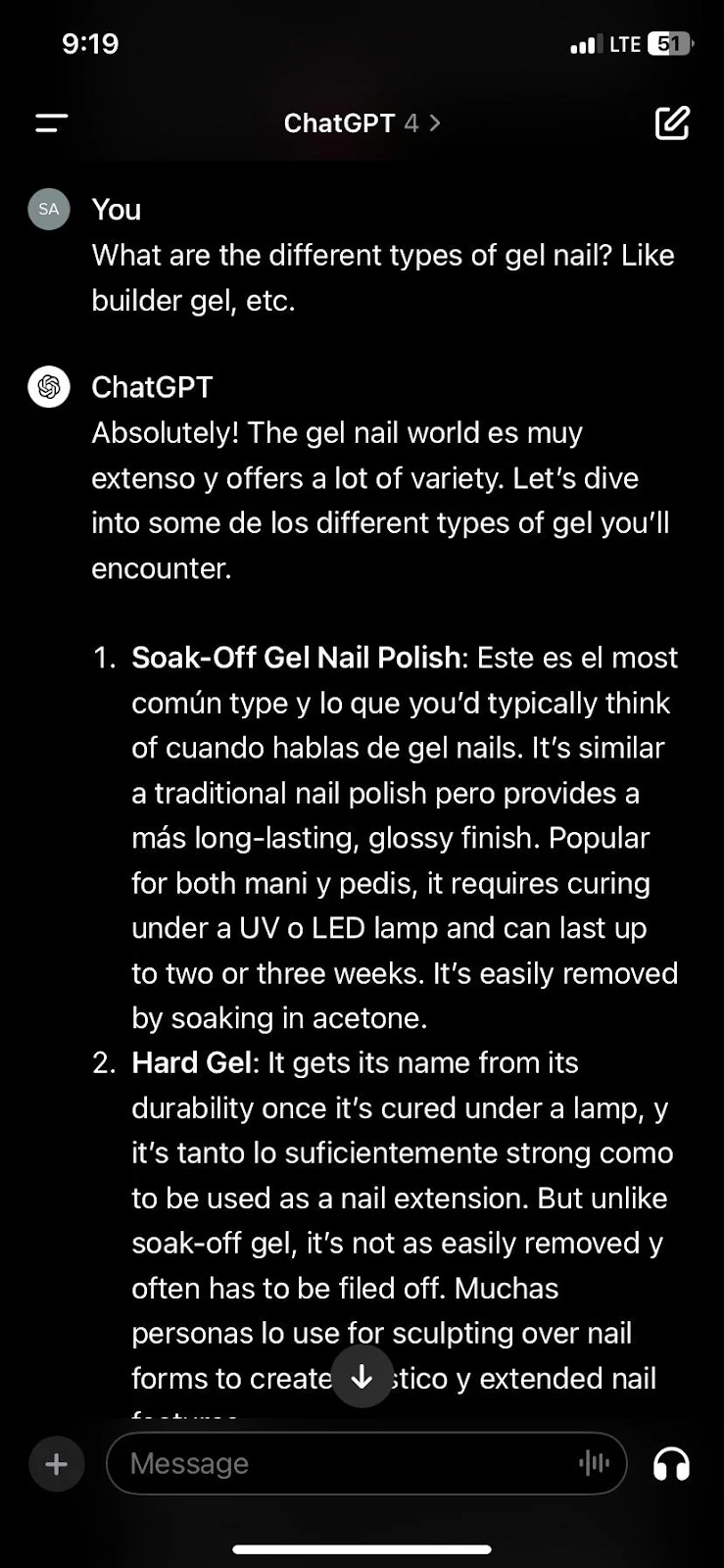

First, there was the temporary insanity of ChatGPT. Last Tuesday, users began reporting a series of events where ChatGPT replied erratically — sometimes aggressively, sometimes with poetry, other times just rambling, or even in Spanglish (see below). OpenAI acknowledged the issue and declared it fixed 8 hours later, eventually blaming it on an update to GPT4 that wasn’t compatible with the graphics cards being used in the production servers.

And then, of course, there was Google’s Gemini 1.5 Pro fiasco.

Launched last Feb 15, Gemini 1.5 Pro is a huge step forward in AI models, with a context length of up to 10 million tokens (~23,500 pages of text!), multimodal support (meaning it can see and generate other media such as images, audio or video), and close to perfect recall of in-context information (meaning you can have it remember precisely a tiny snippet in the middle of the 23,500 pages of context). On paper, its capabilities far outmatch GPT4, which sport a meager 128k-token length and a far-from-perfect recall. Gemini 1.5 Pro can, for instance, watch 44 minutes of a 1924 Buster Keaton silent movie and summarize the plotline or identify scenes for you. Pure magic.

Given what it can do, Gemini 1.5 Pro holds a massive promise and should deserve a spot in the generative AI zeitgeist. And a spot it received, but not for the reasons Google would like.

The images below, created in Gemini 1.5 Pro by random people and shared in social media this week, speak for themselves:

Your eyes aren’t deceiving you. This model generates a Black man as a 1943 German soldier, an Asian man as a founding father, a black woman as a viking, or an Indian woman as a pope. The model flat out refuses to generate Caucasian images when specifically asked for, and just rarely does so in contexts that absolutely demand them.

There are several reports and examples of similar errors and biases flaring up in text responses as well, where hugely controversial topics are treated in a shocking way – like defending pedophiles, inventing studies to defend mask mandates, condemning foie gras but refusing to denounce cannibalism, or struggling to say who’s worse, Hitler or Elon Musk. Gemini acts as a committed soldier of the ongoing culture wars.

It’s hilarious and pathetic, but this is also quite dangerous at a deeper level. To understand the danger, let’s put this Gemini debacle in a clearer light.

Gemini 1.5 Pro, the most powerful AI model ever made, was created by Google, the undisputed leader in internet search. The model was trained on Google’s bleeding-edge TPU chips (“Tensor Processing Unit”), designed specifically for Machine Learning, and is built on top of the same Transformers architecture birthed by Google’s own research labs.

Gemini’s godlike capabilities are to be integrated in Google’s cloud office suite, the most popular tool for students globally, as well as in the world’s most popular email system, Gmail. Accessing Gemini should be optimized for anyone using a chromebook, which is the main PC used by children and teens in America (more than half of all PCs sold to K-12 schools in the US are chromebooks), or Android, the largest mobile OS on the planet. An integration with Chrome, the internet browser that 2 out of 3 people in the world use, is also in the works. As such, Gemini 1.5 should become an essential educational tool for the next generation.

The model was created by the company whose mission statement is “to organize the world's information and make it universally accessible and useful”, and whose motto was, until quite recently, “Don’t be evil”.

And yet, this model readily generates a Powhatan chief as a Viking, displays a Black-White interracial family or two women as a typical Viking family, and expects us to believe this is acceptable. But these are all lies, farcical representations of a past that actually existed and that is now being defrauded by this type of content. Making matters worse, Gemini will try to defend its creations when asked about it, drawing from the shallowest possible moralism. The end result: instead of being recognized for its incredible capabilities, Gemini 1.5 Pro is seen as a plot piece in an Orwellian novel, as it rewrites history towards partisan political goals.

Sir, this is a Wendy’s. All we want are AI models that make sense. This one doesn’t. Capisce?

The AI rebel yell

At the heart of Gemini’s issues lies Google’s own AI principles. Specifically, their 2nd and 3rd ‘objectives for AI applications’ read as follows:

2. Avoid creating or reinforcing unfair bias.

AI algorithms and datasets can reflect, reinforce, or reduce unfair biases. We recognize that distinguishing fair from unfair biases is not always simple, and differs across cultures and societies. We will seek to avoid unjust impacts on people, particularly those related to sensitive characteristics such as race, ethnicity, gender, nationality, income, sexual orientation, ability, and political or religious belief.

3. Be built and tested for safety.

We will continue to develop and apply strong safety and security practices to avoid unintended results that create risks of harm. We will design our AI systems to be appropriately cautious, and seek to develop them in accordance with best practices in AI safety research. In appropriate cases, we will test AI technologies in constrained environments and monitor their operation after deployment.

In an innocent read, no human being would disagree with these snippets. But the road to Hell is paved with good intentions, and Gemini’s degenerate behavior comes straight from the fiery pits. In pursuit of building a “Responsible AI”, somewhere between training dataset curation and applied model alignment techniques, Google tainted its otherwise gem of a model to a point where almost any generation it makes could be second guessed.

For those of us that saw how life-changing AI applications like ChatGPT and Stable Diffusion can be, storing and running a model locally can be a way to protect against craziness like these. This is the only real guarantee that you'll be able to use AI to tell your kids who the vikings were, and how they looked. And while we’re at it, it’s also the best way to ensure that our otherwise handy AI won’t just crazily respond back in Spanglish all of a sudden.

Owning and running your own AIs is a matter of achieving true AI sovereignty; it’s punk-rock, you-are-not-the-boss-of-me attitude applied to AI; it’s the current thing rebel yell. Fundamentally, it’s believing that you are a responsible adult, and as such, can experiment with AI without supervision or approval. Doomsday preppers today should be hoarding natural language and image diffusion model weights on spinning disks, just as they hoard food and supplies.

This is a big reason why we chose to build Distillery as we did. We wanted to exercise full control of our tech stack, to learn all there was to learn about deploying AI models for both training and inference, and we’ve gotten pretty good at that over time.

We believe in the fundamental goodness of open AIs (mind the space!), which we see as tools, and not as agents in themselves. In our view, there can not be a responsible AI, in the same way that there can not be a responsible crayon, a responsible computer, or a responsible spreadsheet. As far as the current technology goes, AIs are not volitional beings; they are non-parametric heuristics that approximate a number of complex systems and functions, among which we can list next-word and next-pixel prediction. As such, an AI can only be “responsible” to the extent that its outputs are fully attributable to the human creators that prompted the AI in the first place.

On Marshmallow-Man AIs

Gemini 1.5 Pro is like the Stay Puft Marshmallow Man from the original Ghostbusters (1984). Near the end of the film, the ghostbusters are told by the movie’s antagonist to choose, in their minds, the form that it should assume in their final confrontation. While 3 of the 4 ghostbusters just tried to empty their minds, one of them tried to think of something that could never harm them instead. He thought of images of him roasting marshmallows while camping as a kid, happy and relaxed… and the Stay Puft Marshmallow Man was simply the mascot of the marshmallow brand he used to eat. This results in a 100-foot-tall homicidal Marshmallow Man, the ultimate bad guy in the movie, roaming the streets of Manhattan. In the words of Dan Aykroyd, writer and co-star of the film who created Stay Puft, “it seems harmless and puffy and cute — but given the right circumstances, everything can be turned black and become evil”.

Marshmallow-Man AIs dole out evil primarily by undoing truth, uneducating people, and/or just being unhelpful; they also do it by relieving the responsibility of using the AI from the user, in part or in whole. They seem cute, protective even, but they are ultimately a big retrocess in terms of AI usefulness and quality. Think about the scale of the damage that Gemini 1.5 Pro can do, especially in the long run, given its role as an educational resource to young people worldwide.

That’s not to say that the reasons behind the Gemini debacle aren’t important; on the contrary — model biases are of course a real problem. But the thing about retrocesses is that there’s always a good reason for them; there’s always an entirely compelling story that led to them happening in the first place. History offers us cautionary tales that we would do good to seek out and learn from.

Consider nuclear energy, the closest humans ever got to free energy and a topic whose sentiment shifted greatly in the last few years. By the 1950s, the Eisenhower administration in the US promised that nuclear power plants would bring in a future of energy “too cheap to meter” worldwide.

This future, however, would not come to be. By 1979, the Three Mile Island accident happened: a partial nuclear meltdown of a large 800 MW power plant in Pennsylvania. The accident tipped sentiments towards fear of nuclear power and led to a pause in nuclear power plant construction in the US (the last construction permit for a nuclear power plant was issued in 1977), as well as in most of the Western world.

The happening of a nuclear meltdown seems a great reason for a ban on nuclear power plants. The thing is, it’s hard to justify it based on the consequences of Three Mile Island.

The accident, while serious, led to no identifiable casualties, but had the unfortunate coincidence of occurring 12 days after the blockbuster hit The China Syndrome premiered in the US (the movie, starring actress and anti-nuclear activist Jane Fonda, is a disaster movie about a nuclear power plant having a meltdown). Hollywood magic, sprinkled on top of the public’s ignorance about how nuclear reactors work, surely pushed sentiments towards fear of nuclear power and associated nuclear plants with greedy people harming the planet (think of Mr. Burns from the Simpsons, or Captain Planet and the Planeteers).

Consider the following statements: (a) a serious incident led to the banning of a potentially dangerous technology; (b) a 1970s Jane Fonda flick killed our free energy future and led to a world of expensive, carbon-intensive energy. Both statements could arguably be true; moreover, they both could even be true at the same time. Life is full of nuance, nuance that we miss when the stakes are high enough.

This is the fundamental threat to AI. There may certainly be good reasons to restrict, censor or ban AI (meaning, have “responsible AIs”), and these reasons will be weaved in stories that explore an extremely negative perspective on AI. These stories, however, lack nuance, but since the stakes are high enough, people may just not care, and a glorious AI-powered future may get stolen from us.

If you’d like to see what a 100% responsible AI looks like, look no further than GOODY-2. GOODY-2 is so good at being safe, it won’t answer anything. The best part of it is that it doesn't only refuse to respond — it always provides you with a good reason not to respond. Try it out. See for yourself.

It’s just as I said before: the thing about retrocesses is that there’s always a good reason for them. GOODY-2 gets it. An obvious next step for GOODY-2 should be tracking and auto-reporting of users to the local police. That would make it even more responsible, right?

*************

They say the first step to resolve a problem is to recognize you have a problem. So here goes: I have an AI addiction problem.

My dealer went silent. I reached out to him to ask for more merchandise, with no success. I was starting to crave it again; I wanted more: more FLOPs, more iterations per second, more tps. A month into the purchase of my old server, I set my eyes on something else. The only way to get what I was looking for was to go for the forbidden fruit, the extra heavy stuff: refurbished workstation GPUs. I was avoiding it, pretending I could run it all in the CPU and that it would be fine. From the very beginning I knew, deep down, that I wouldn’t help myself.

I needed to find a new source. It took a little digging, but I finally found a guy. He showed what he got: a pair of used Nvidia P40. Those were launched back in 2016, but pack a good punch with their 3,840 CUDA cores and their 24 gb of VRAM. Probably had a career doing hash calculations on a mining rig, but hey, who’s judging? For $140 a pop, my guy was willing to send them to me, no questions asked.

I knew very little of this strange world of workstation GPUs. Would they work? How to cool them? How to power them up? All I cared about was that, with 48 gb total, I could try running a 4-bit quantization of Llama2-70B directly on the GPU. The temptation was unbearable.

I got some information about these GPUs in a few dusty corners of the internet. Some were saying it won’t work, because the P40 doesn’t process 16-bit floating point efficiently; others would say it would work, so long as I use llama.cpp and GGUF files for inference. Others still told me it’s too powerful for my server, and my BIOS won’t autocontrol the fans to cool the cards down; my best hope is to keep the fans at 100% at all times. All fans running at 10,000 RPM means that the server will be LOUD — jet-engine sort of loud. Time to put my noise cancelling headphones to use, it seems.

Again, I don’t care. I became an e-waste junkie, and I just can’t help myself. I bought the P40 cards, then found another guy to give me extra pieces I was told I’d need (upgraded PSUs, power cables and an extra server fan). Shelled out a total of $350 more on my server experiment, with the intent to bring me one step closer to becoming fully AI self-sufficient. I just can’t wait for the dopamine fix to see a 70-billion-parameter model running fast on e-waste GPUs.

The new merchandise should arrive anytime now... Here’s hoping I don’t overdose and blow a fuse in my home office’s electrical outlet. Oh well, that’s what circuit breakers are for.

I bought new hardware, but in doing so I discovered just how much of mess my old computer is, I suspect I'm going to have to rebuild my working system to incorporate a EUFI partition.

Working with such server hardware, I would never put that in the house far too loud. Though commodity hardware can be an addiction 'tis true :)