[Part 1] Pair Programming With LLMs on the Example of Image Generation Service (Distillery)

A series on unlocking the full power of coding with LLMs for non-developers

Hello FollowFox Community!

With this post, we want to return to how our blog started: a step-by-step account of our explorations, regardless of the difficulty level.

In this post, we will learn how to master programming with LLMs well enough to understand and create sophisticated services, even if you are not a developer. This series is about the journey of starting from a fairly large codebase and, with the help of LLMs, learning how it works, what all of its individual parts do, and how to improve, document, and contribute to it as a non-developer.

About the Idea of Pair Programming

Many of you might know that last year, FollowFox launched its first public-facing service, Distillery (link), which for now is a Discord image generator built around Stable Diffusion and related technologies. Over multiple updates, Distillery grew from a service with almost no features beyond basic text-to-image generation into one of the most advanced services of its kind, letting users access, through a chat interface, pretty much every advanced image generation feature: ControlNets, image-to-image generation, IPAdapters, combining multiple LoRAs, training their own LoRAs from as little as a single image, inpainting, image masking, using LLMs for prompting, and a lot more. Check our user documentation for the full list (link).

Every individual part of Distillery either comes from the open-source community or was created by us and shared with the community (such as our Vodka-based models (link) or ComfyUI-serverless (link)). However, all those parts need to be tied together, and that takes a lot of code to create a truly scalable service that today serves over 12,000 users. We wrote an overview of that code and how it was built, but we have yet to share the code itself, for reasons also discussed in that post (link).

Team Roles at Distillery

While Distillery is a team project, Felipe is the true mastermind behind most of the code running Distillery: he has devoted hundreds of hours to it and built a scalable, stable service with tens of thousands of lines of code.

Meanwhile, I have contributed to some individual parts of Distillery on the code side, but I never gained a deep, holistic understanding of the codebase. In this series, we will attempt to change that.

About my Coding Skills and Experience with LLMs

I have some Python coding experience, especially in data analytics, given my background. For the same reason, I’m quite comfortable with SQL. For the past year, I have been using ChatGPT for all my coding needs; however, this was usually limited to relatively simple, standalone utility scripts rather than building a more complex system.

Setting the Goals

As usual, we emphasize the importance of setting some goals ahead of time.

On a personal level, my ultimate goal is to unlock the full potential of LLM-enabled coding as a skill, especially since we all expect LLMs to keep getting better at this kind of assistance.

A more immediate personal goal is mastering Distillery’s code, which has a few obvious benefits: it enables more direct contributions, and it diversifies risk away from a single point of failure, relieving some of the pressure on Felipe.

On a more tactical level, throughout this series we will figure out the best way to use LLMs to work with all of Distillery’s code and to accomplish the following tasks in service of the goals above:

Understanding the overall structure and logic of the codebase

Deep dives into individual parts of the code

Attempt to create more technical documentation

Ambitious goal 1: Attempt to simplify the code, write test cases, debug, identify technical debt, or make the code more efficient

Ambitious goal 2: Take one of the ideas from the backlog and directly implement it in the production code of Distillery

Last but not least, we think this series can serve as a path toward fully open-sourcing Distillery.

Choosing the Tools for LLM Pair Programming

So far, I have only used ChatGPT’s UI for coding: I describe the task I need to perform, start with the code it provides, paste back any errors, and iterate this way; typically, after a few tries, I end up with the script I need.

This time, my initial plan was to find the most advanced LLM-enabled coding setup out there and simply adopt it. However, figuring out what that even meant was taking far more time than I’m comfortable spending just to get started, so I abandoned the idea.

Instead, I decided to start with Anthropic’s Claude 3 Opus (link) via its web UI, which meant committing to the Pro subscription. Its superior results on coding benchmarks, its large context window (enough to input all of the Distillery code), and the simplicity of this path were the main reasons behind this decision.

As we go through this journey, there is a high likelihood that we will go beyond Claude’s web interface.

Preparing the code for an LLM

To understand the code, we ideally want the LLM to see the whole codebase; pasting small chunks of code simply does not work for this task. Thanks to increased context lengths, this is now possible: we just need to attach the whole codebase to the LLM chat UI.
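Before attaching anything, it can help to check roughly whether a codebase fits in a model's context window. The sketch below is ours, not part of Distillery: it walks a folder and estimates tokens with the common rule of thumb of about 4 characters per token (an assumption; real tokenizers vary, especially on code), and the list of text extensions is a hypothetical choice you would tune for your own repo.

```python
import os

# Rule of thumb, not a real tokenizer: roughly 4 characters per token.
CHARS_PER_TOKEN = 4

# Hypothetical list of extensions treated as readable source/config text.
TEXT_EXTENSIONS = {".py", ".json", ".sh", ".md", ".txt", ".yml", ".yaml", ".sql"}


def estimate_tokens(text, chars_per_token=CHARS_PER_TOKEN):
    """Approximate token count from character length (ceiling division)."""
    return -(-len(text) // chars_per_token)


def estimate_folder_tokens(root):
    """Walk a folder and sum estimated tokens over text-like files."""
    total = 0
    for dirpath, dirnames, filenames in os.walk(root):
        # Prune git metadata in place so os.walk does not descend into it.
        dirnames[:] = [d for d in dirnames if d != ".git"]
        for name in filenames:
            if os.path.splitext(name)[1].lower() in TEXT_EXTENSIONS:
                path = os.path.join(dirpath, name)
                with open(path, encoding="utf-8", errors="ignore") as f:
                    total += estimate_tokens(f.read())
    return total


def fits_in_context(tokens, context_window, reserve_for_answer=4_000):
    """True if the code still leaves room for our question and the answer."""
    return tokens + reserve_for_answer <= context_window
```

For example, `fits_in_context(estimate_folder_tokens("distillery_code"), 200_000)` compares against the 200K-token window Anthropic advertised for the Claude 3 models; the folder name is hypothetical, and context limits change over time, so check the current numbers.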

Note that in this case, we actually prefer the chat interface. If we did this via the API, we would be charged for all the tokens of the attached codebase on every request, while with the monthly subscription, we just attach the PDF to the chat and talk it over with the LLM.
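To see why resending the codebase adds up, here is a back-of-the-envelope sketch. It assumes a chat-style API where every request carries the full conversation history, including the attached code; `price_per_million` is a placeholder you would fill in from the provider's current pricing, and output tokens (billed separately) are ignored.

```python
def conversation_input_tokens(context_tokens, turn_tokens):
    """Total input tokens billed when every request resends the whole history.

    context_tokens: size of the attached codebase, sent with every request.
    turn_tokens: tokens added before each request (the new question plus
    the model's previous answer).
    """
    total = 0
    history = context_tokens
    for added in turn_tokens:
        history += added  # the conversation grows by one more exchange
        total += history  # and the entire history is sent again
    return total


def estimated_cost(tokens, price_per_million):
    """Convert a token count to money at a placeholder per-million price."""
    return tokens / 1_000_000 * price_per_million
```

With a 100,000-token codebase and three turns that each add 1,000 tokens, `conversation_input_tokens(100_000, [1_000, 1_000, 1_000])` returns 306,000 input tokens: the codebase is paid for three times.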

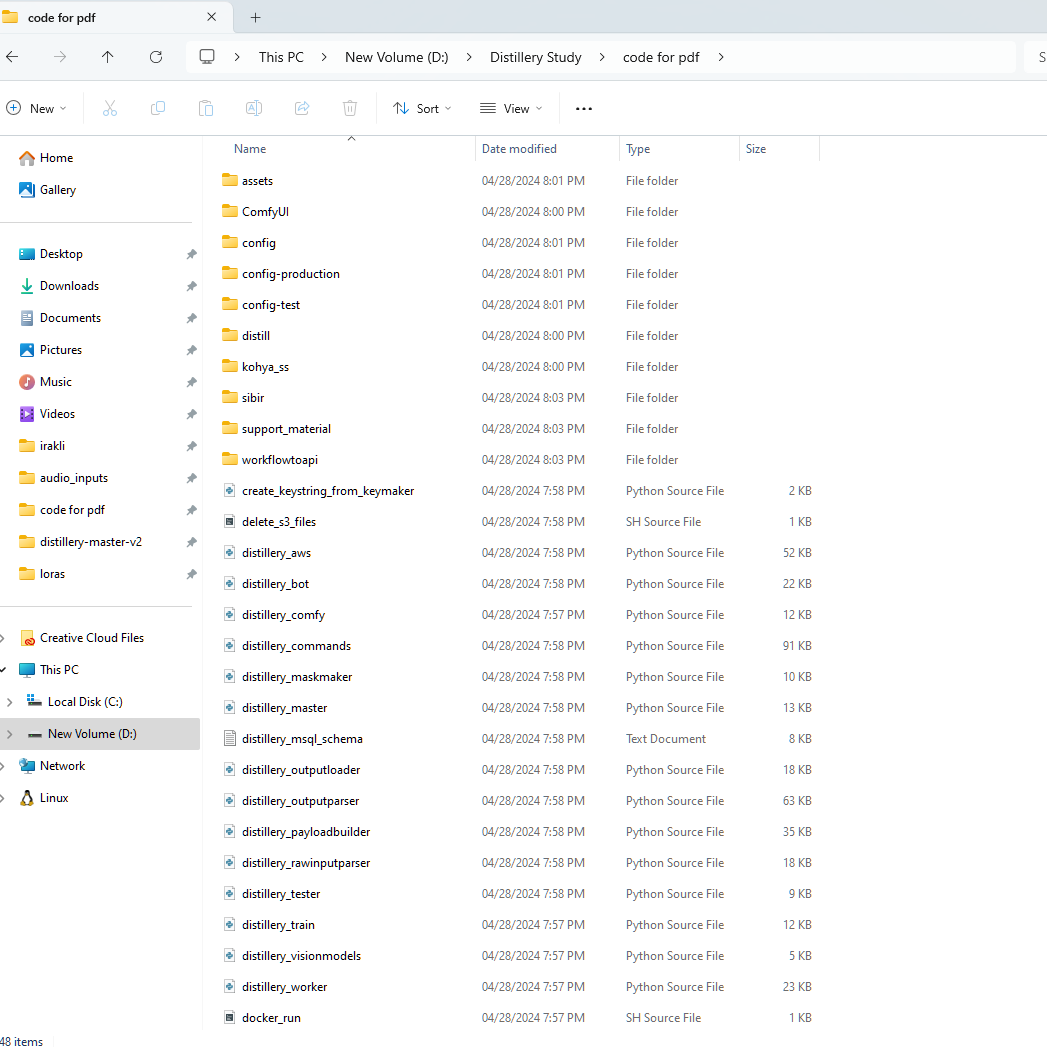

As you might remember from previous posts, most of the Distillery code lives in the Distillery Worker and Distillery Master repositories. So we created local copies of both (a simple git clone), removed anything sensitive such as passwords, and then copied all the files into a single folder. It looks something like this:

As you can see, there are far too many files, so we will use a dedicated tool to turn them into a single, giant PDF file. repo2pdf (https://github.com/BankkRoll/repo2pdf) seemed like a perfect fit for the task, so we decided to use it.
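repo2pdf handles the conversion for us, but the underlying idea is simple enough to sketch. The script below is a plain-text alternative we wrote for illustration, not how repo2pdf works internally: it concatenates text files from the combined folder into one file with a header per file, skips binary files by extension, and redacts quoted values assigned to secret-looking names. The extension list and the secret pattern are assumptions, and the pattern misses unquoted values (for example, many shell exports), so a manual review is still needed.

```python
import os
import re

# Hypothetical list of extensions worth showing to the LLM.
TEXT_EXTENSIONS = {".py", ".json", ".sh", ".md", ".txt", ".yml", ".yaml", ".sql"}

# Matches e.g. password = "x", API_KEY = 'x', "db_password": "x".
# Only quoted values are caught; unquoted ones must be removed by hand.
SECRET_PATTERN = re.compile(
    r"""(?i)\b(\w*(?:password|secret|token|api_key)\w*)(["']?\s*[:=]\s*)(["'])(.*?)\3"""
)


def redact(text):
    """Replace quoted secret-looking values with a placeholder."""
    return SECRET_PATTERN.sub(r"\1\2\3<REDACTED>\3", text)


def bundle_repo(root, out_path):
    """Concatenate text files under root into out_path; return the file count."""
    count = 0
    with open(out_path, "w", encoding="utf-8") as out:
        for dirpath, dirnames, filenames in os.walk(root):
            dirnames[:] = sorted(d for d in dirnames if d != ".git")
            for name in sorted(filenames):
                if os.path.splitext(name)[1].lower() not in TEXT_EXTENSIONS:
                    continue  # skip binaries such as fonts and images
                path = os.path.join(dirpath, name)
                rel = os.path.relpath(path, root)
                with open(path, encoding="utf-8", errors="ignore") as f:
                    body = redact(f.read())
                # A header per file lets the LLM tell which code lives where.
                out.write(f"\n===== FILE: {rel} =====\n{body}\n")
                count += 1
    return count
```

Write the output outside the source folder, for example `bundle_repo("distillery_code", "distillery_bundle.txt")` (hypothetical paths); otherwise a later run would pick up the previous bundle as one of its inputs.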

In the end, we had a 650KB file with ~350 pages. We will attach this to the LLM chat and see what happens.

Claude was not happy about the file size, and while ChatGPT accepted it, it got confused by the initial parts of the file, so we had to go back to our PDF and see what else could be removed.

Right away, we saw that the base64 block at the start came from a font file, so we deleted SpaceMono-Bold.ttf. Claude was still complaining about the length, so we deleted a few more files whose names marked them as testers, to concentrate on the main code. In the end, we were left with a 225-page PDF of code, and both ChatGPT and Claude were happy to use it.
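The trimming we did by hand can be made a bit more systematic. This hypothetical helper lists the largest files in the folder and flags likely removal candidates: binary assets such as font files (the SpaceMono-Bold.ttf case) and files whose names contain "test", which also catches "tester" files. The extension list and the naming rule are our assumptions, not anything repo2pdf does.

```python
import os

# Hypothetical set of extensions that rarely help an LLM read code.
BINARY_EXTENSIONS = {".ttf", ".otf", ".woff", ".png", ".jpg", ".jpeg", ".gif", ".ico", ".pdf", ".zip"}


def removal_candidates(root, top_n=10):
    """Return the top_n largest files as (size_bytes, relative_path, reasons)."""
    rows = []
    for dirpath, dirnames, filenames in os.walk(root):
        dirnames[:] = [d for d in dirnames if d != ".git"]
        for name in filenames:
            path = os.path.join(dirpath, name)
            reasons = []
            if os.path.splitext(name)[1].lower() in BINARY_EXTENSIONS:
                reasons.append("binary asset")
            if "test" in name.lower():  # also matches "tester"
                reasons.append("test/tester file")
            rows.append((os.path.getsize(path), os.path.relpath(path, root), reasons))
    # Largest first: these shrink the bundle the most when removed.
    rows.sort(key=lambda row: row[0], reverse=True)
    return rows[:top_n]
```

Sorting by size puts files like embedded fonts at the top, which is where we found the base64 block that confused the models.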

Initial Exploration

At the beginning of this journey, we looked for educational resources on this topic. There are some videos on YouTube (including this Harvard CS50 Tech Talk with Dr. Matt Welsh, which ended up being a total waste of an hour), a few blog posts, and some courses. We took the short deeplearning.ai course Pair Programming with a Large Language Model (link); it is useful but very basic, so we will mostly use it as inspiration for some prompts.

After attaching the PDF, our request was fairly basic and straightforward:

Can you please explain how this code works?

Use a lot of detail and make it as clear as possible.

ChatGPT ended up being very lazy (as of April 28th; LLMs tend to have varying moods over time). It clearly read only the initial part of the file, and we had to prompt it again and again before getting something useful. This can be a real problem if you are not familiar with the code at all, since the LLM’s response can point you in the wrong direction. (link to the chat)

On the other hand, Claude’s response took much longer to generate, and the quality of the output for this particular task was significantly better. However, it also came with an annoying warning: 6 messages remaining until 2 AM.

Let’s take a look at Claude’s response and discuss it, as it can give us the structure for what we will be doing in this series.

The intro is very much on point:

This code consists of several Python scripts, configuration files, and supporting materials for a Discord bot called "Distillery" that interacts with a generative AI model running on the Runpod platform to create images based on user prompts. Here's a detailed explanation of how the system works:

Then it outlines the core components:

Main Components:

Distillery Bot (distillery_bot.py): This is the main Discord bot that listens for slash commands from users, validates the requests, and pushes them to a queue for processing.

Distillery Master (distillery_master.py): This script pops requests from the queue, sends them to Runpod for image generation, saves the generated images to Amazon S3, and pushes the image URLs to a send queue.

Distillery Commands (distillery_commands.py): Contains the code for handling different commands like "/serve", "/zoomout", "/upscale", etc. It parses user input, creates payloads for the AI model, and interacts with the Master and Bot via a MySQL database.

Distillery Worker (distillery_worker.py): Runs on the Runpod platform and receives payloads from the Master. It uses the ComfyUI library to generate images based on the payloads and returns the image URLs.

The main components were outlined very clearly; we should probably start by reviewing these files.

Then it lists the supporting files and configs:

Configuration and Supporting Files:

config.json: Contains various configuration settings like API keys, database connections, command parameters, etc.

set_env_variables.sh: Sets environment variables used by the scripts.

distillery_aws.py: Provides functionality for interacting with AWS services like S3, RDS, and CloudWatch.

distillery_rawinputparser.py and distillery_outputparser.py: Parse user input and create properly formatted payloads for the AI model.

distillery_payloadbuilder.py: Builds the final payload sent to the worker.

Dockerfile and docker_run scripts: Used for containerizing the bot and master.
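Claude's summary says config.json holds settings such as API keys and database connections, and that set_env_variables.sh sets environment variables, but it does not say how the two interact. One common pattern, sketched below under that assumption and not taken from Distillery's code, keeps settings in JSON and lets environment variables override selected keys so secrets can stay out of the file; all key and variable names here are hypothetical.

```python
import json
import os


def load_config(path, env_overrides):
    """Load JSON settings, then let environment variables override some keys.

    env_overrides maps a config key to an environment variable name,
    e.g. {"db_password": "DB_PASSWORD"} (hypothetical names).
    """
    with open(path, encoding="utf-8") as f:
        config = json.load(f)
    for key, env_name in env_overrides.items():
        value = os.environ.get(env_name)
        if value is not None:  # only override when the variable is set
            config[key] = value
    return config
```

With this split, a script in the spirit of set_env_variables.sh would only need to `export` the secret variables before the bot or master starts.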

Then it describes how the overall workflow works. We can cross-check this with our previous post (link):

Workflow:

1 - A user sends a slash command (e.g., /serve prompt) to the Distillery Bot on Discord.

2 - The Bot validates the request (checks permissions, throttling limits, etc.) and pushes it to the GenerationQueue in the MySQL database.

3 - The Distillery Master continuously pops requests from the GenerationQueue.

4 - For each request, the Master does the following:
a. Parses the user input using distillery_rawinputparser.py and distillery_outputparser.py.
b. Builds a payload using distillery_payloadbuilder.py.
c. Sends the payload to the Distillery Worker on Runpod via the Runpod API.
d. Receives the generated image URLs from the worker.
e. Saves the images to Amazon S3 using distillery_aws.py.
f. Pushes the image URLs to the SendQueue in the MySQL database.

5 - The Bot continuously checks the SendQueue.

6 - For each item in the SendQueue, the Bot sends the generated images back to the user on Discord.
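To make the workflow concrete, here is a toy, single-process version of the queue handoff. It is not Distillery's code: an in-memory SQLite database stands in for MySQL, the GenerationQueue and SendQueue table names come from the summary above but their columns are hypothetical, the validation is a placeholder for the real permission and throttling checks, and a fake worker returns made-up URLs instead of calling Runpod, so the S3 step is skipped.

```python
import sqlite3


def make_db():
    """In-memory SQLite database standing in for the MySQL queues."""
    db = sqlite3.connect(":memory:")
    db.execute(
        "CREATE TABLE GenerationQueue ("
        "id INTEGER PRIMARY KEY AUTOINCREMENT, user_id TEXT, command TEXT, prompt TEXT)"
    )
    db.execute(
        "CREATE TABLE SendQueue ("
        "id INTEGER PRIMARY KEY AUTOINCREMENT, user_id TEXT, image_urls TEXT)"
    )
    return db


def bot_enqueue(db, user_id, command, prompt, allowed_commands=("serve",)):
    """Steps 1-2: validate a slash command and push it to GenerationQueue."""
    if command not in allowed_commands or not prompt.strip():
        return False  # placeholder for permission/throttling checks
    with db:  # the connection context manager commits on success
        db.execute(
            "INSERT INTO GenerationQueue (user_id, command, prompt) VALUES (?, ?, ?)",
            (user_id, command, prompt),
        )
    return True


def pop(db, table):
    """Remove and return the oldest row of a queue table, or None if empty."""
    # table is always one of our two fixed names, never user input.
    with db:
        row = db.execute(f"SELECT * FROM {table} ORDER BY id LIMIT 1").fetchone()
        if row is not None:
            db.execute(f"DELETE FROM {table} WHERE id = ?", (row[0],))
    return row


def fake_worker(payload):
    """Stand-in for the Runpod/ComfyUI worker: returns made-up image URLs."""
    slug = payload["prompt"].replace(" ", "_")
    return [f"https://example.com/{slug}_{i}.png" for i in range(payload["batch_size"])]


def master_step(db, worker=fake_worker):
    """Steps 3-4: pop a request, build a payload, call the worker, queue results."""
    row = pop(db, "GenerationQueue")
    if row is None:
        return False
    _, user_id, command, prompt = row
    payload = {"command": command, "prompt": prompt, "batch_size": 2}  # hypothetical shape
    urls = worker(payload)
    # The real Master also saves the images to S3 here; skipped in this toy.
    with db:
        db.execute(
            "INSERT INTO SendQueue (user_id, image_urls) VALUES (?, ?)",
            (user_id, ",".join(urls)),
        )
    return True


def bot_deliver(db):
    """Steps 5-6: drain SendQueue and return what would be posted to Discord."""
    delivered = []
    while (row := pop(db, "SendQueue")) is not None:
        _, user_id, urls = row
        delivered.append((user_id, urls.split(",")))
    return delivered
```

Calling `bot_enqueue(db, "user-1", "serve", "a red fox")`, then `master_step(db)`, then `bot_deliver(db)` returns `[("user-1", ["https://example.com/a_red_fox_0.png", "https://example.com/a_red_fox_1.png"])]`. With several Master processes polling one MySQL table, the select-then-delete in `pop` would need row locking (for example, `SELECT ... FOR UPDATE SKIP LOCKED` in MySQL 8.0+); how Distillery actually handles this is not covered by the summary.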

This is simple, easy to follow, and gives a good sense of what’s happening in Distillery. If anything, it doesn’t say much about what happens on Runpod via ComfyUI, but to be fair, that code is not directly included here.

Finally, it lists out some additional features:

Logging: The system uses AWS CloudWatch for logging various events and errors.

Caching: AWS Memcached is used for handling concurrent requests and avoiding conflicts.

Database: MySQL (AWS RDS) is used for storing request data, user information, and queues.

Containerization: The bot and master are run inside Docker containers.
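The summary only says Memcached is used for handling concurrent requests and avoiding conflicts, without details. A common way to do that, sketched here as an assumption rather than Distillery's actual approach, is a short-lived lock built on memcached's atomic add, which stores a key only if it does not already exist. To keep the example runnable without a server, InMemoryCache is a minimal single-process stand-in for a real client; with pymemcache, for instance, you would call add with noreply=False so that it reports whether the key was stored.

```python
import time


class InMemoryCache:
    """Minimal single-process stand-in for a memcached client (illustration only)."""

    def __init__(self):
        self._data = {}  # key -> (value, expiry timestamp; 0 means never)

    def add(self, key, value, expire=0):
        """Store value only if key is absent or expired, like memcached's add."""
        now = time.monotonic()
        entry = self._data.get(key)
        if entry is not None and (entry[1] == 0 or entry[1] > now):
            return False  # someone else already holds this key
        self._data[key] = (value, now + expire if expire else 0)
        return True

    def delete(self, key):
        self._data.pop(key, None)


def try_acquire(cache, key, ttl=60):
    """Take the lock if free; the TTL (seconds) frees it if a holder crashes."""
    return cache.add(key, "locked", expire=ttl)


def release(cache, key):
    """Drop the lock so the next request for this key can proceed."""
    cache.delete(key)
```

A process would call `try_acquire(cache, "request-lock:user-1")` (a hypothetical key scheme) before doing the work and `release` in a `finally` block afterwards; if acquiring fails, another process is already handling that key.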

It also ended with the kind of short conclusion LLMs love to write:

This is a high-level overview of how the Distillery system works. The code is modular and uses various libraries and AWS services to handle different aspects of the workflow. The configuration files allow for customization of commands, AI models, and other parameters.

Plan for The Next Steps

We will use this post for setup purposes, so there are no code deep dives here. Instead, let’s plan out future posts following the LLM’s suggestions:

Understand the high-level architecture and workflow (we did this, at a very high level)

Analyze the configuration and environment setup (we might move this lower in priority)

Deep dive into the Distillery Bot (likely the next post)

Deep dive into the Distillery Master

Deep dive into the Distillery Worker

Database and AWS services integrations

Create technical documentation (this is optional, let’s see if we get to this)

Code simplification and optimization (this is also optional)

Implement backlog ideas (we will try our best to implement at least one idea during this series)

We hope you find this interesting and exciting, and that you will join us on this journey and learn a thing or two.